My very first job was on the Google Maps API, which was the world's most popular API at the time. We were still figuring out what made a good API, so we experimented with things like API keys, versioning, and auto-generated docs. We even created Google's first API quota system for rate-limiting keys, which was later used by all of Google's APIs. A lot of work went into creating a fully featured API!

That's why I love services like Azure API Management, since they make it possible for any developer to create a fully featured API. (Other clouds have similar features, like AWS API Gateway and Google API Gateway.) You can choose whether your API requires keys, then add API policies like rate-limiting, IP blocking, caching, and many more. At the non-free tier, you can also create a developer portal to receive API sign-ups.

That's also why I love the FastAPI framework, as it takes care of auto-generated interactive documentation and parameter validation.

I finally figured out how to combine the two, with Azure Functions as the backend, and that's what I want to dive into today.

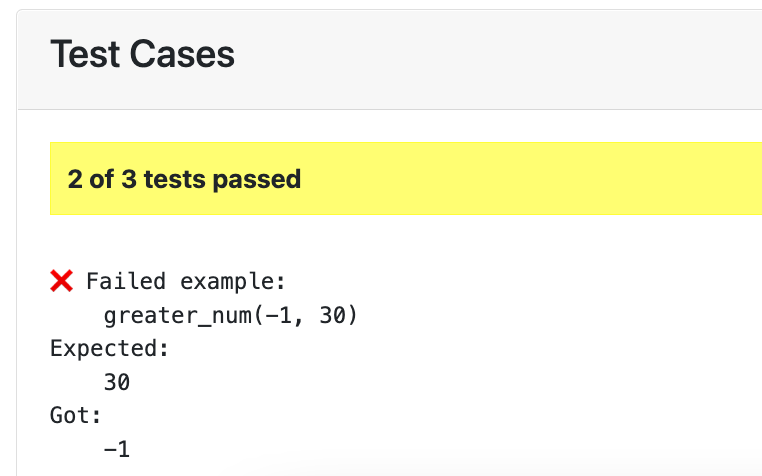

This diagram shows the overall architecture:

Public vs Protected URLs

One of my goals was to have the documentation be publicly viewable (with no key) but the FastAPI API calls themselves require a subscription key. That split was the trickiest part of this whole architecture, and it started at the API Management level.

The API Management service consists of two "APIs" (as it calls them):

- "simple-fastapi-api": This API is configured with `subscriptionRequired: true` and `path: 'api'`. All calls that come into the service prefixed with "api/" will get handled by this API.
- "public-docs": This API isn't really an API; it's the gateway to the documentation and OpenAPI schema. It's configured with `subscriptionRequired: false` and `path: 'public'`.
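To make that split concrete, here is a minimal Python sketch of the decision the gateway makes for each incoming call. It is an illustration only, not how API Management is implemented: the `ApiConfig` class, the `check_access` function, and the sample key are hypothetical, while `Ocp-Apim-Subscription-Key` is the default header that API Management reads the subscription key from.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiConfig:
    """Hypothetical stand-in for one API Management "API"."""
    name: str
    path: str
    subscription_required: bool


APIS = [
    ApiConfig("simple-fastapi-api", "api", subscription_required=True),
    ApiConfig("public-docs", "public", subscription_required=False),
]

VALID_KEYS = {"example-key"}  # made-up subscription key, for illustration only


def check_access(url_path: str, headers: dict[str, str]) -> int:
    """Return the HTTP status a caller would see at the gateway."""
    for api in APIS:
        if url_path == f"/{api.path}" or url_path.startswith(f"/{api.path}/"):
            if not api.subscription_required:
                return 200  # public API: no key needed
            key = headers.get("Ocp-Apim-Subscription-Key")
            return 200 if key in VALID_KEYS else 401
    return 404  # no API is registered for this prefix


print(check_access("/public/docs", {}))
print(check_access("/api/generate_name", {}))
```

The docs request passes without a key, while the keyless call to "/api/generate_name" is rejected before it ever reaches the function.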

The API Gateway uses the path prefixes to figure out how to handle the calls, but then it calls the API backend with whatever comes after the path.

| This API Gateway URL | calls this FastAPI URL |
|---|---|
| "/api/generate_name" | "/generate_name" |
| "/public/openapi.json" | "/openapi.json" |
| "/public/docs" | "/docs" |
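The mapping in that table boils down to stripping the API's path prefix. Here is a small Python sketch of that rewrite, assuming a hypothetical helper named `to_backend_path`; the real rewriting happens inside API Management, not in my code.

```python
def to_backend_path(gateway_path: str, api_prefix: str) -> str:
    """Strip the API's path prefix, leaving the path the backend sees."""
    prefix = "/" + api_prefix.strip("/")
    if gateway_path == prefix:
        return "/"
    if not gateway_path.startswith(prefix + "/"):
        raise ValueError(f"{gateway_path!r} is not under {prefix!r}")
    return gateway_path[len(prefix):]


for gateway_path, api_prefix in [
    ("/api/generate_name", "api"),
    ("/public/openapi.json", "public"),
    ("/public/docs", "public"),
]:
    print(gateway_path, "->", to_backend_path(gateway_path, api_prefix))
```

The backend never sees the "/api" or "/public" prefix, which is exactly why FastAPI needs to be told about it separately.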

But that leads to a dreaded problem that you'll find all over the FastAPI issue tracker: when loading the docs page, FastAPI tries to load "/openapi.json" when it really needs to load "/public/openapi.json".

Fortunately, we can fix that by specifying `root_path` in the FastAPI constructor:

    app = fastapi.FastAPI(root_path="/public")

Now, the docs will load successfully, but they'll claim the API calls are located at "/public/..." when they should be at "/api/...". That's fixed by another change to the FastAPI constructor, the addition of `servers` and `root_path_in_servers`:

    app = fastapi.FastAPI(
        root_path="/public",
        servers=[{"url": "/api", "description": "API"}],
        root_path_in_servers=False,
    )

The `servers` option changes the OpenAPI schema so that all API calls are prefixed with "/api", whereas `root_path_in_servers` removes "/public" as a possible prefix for API calls. If that argument weren't there, the FastAPI docs would present a dropdown with both "/api" and "/public" as options.
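To see why both options are needed, here is a simplified Python model of how the list of servers in the docs dropdown ends up being built. This is a sketch of the behavior described above, not FastAPI's actual code, and `effective_servers` is a hypothetical name.

```python
def effective_servers(servers, root_path, root_path_in_servers=True):
    """Simplified model of the server list shown in the docs dropdown."""
    result = [dict(server) for server in servers]  # copy, don't mutate input
    urls = {server["url"] for server in result}
    root_path = root_path.rstrip("/")
    # The root path is added as an extra server unless that is switched off.
    if root_path and root_path_in_servers and root_path not in urls:
        result.insert(0, {"url": root_path})
    return result


api_server = [{"url": "/api", "description": "API"}]
print(effective_servers(api_server, "/public"))
print(effective_servers(api_server, "/public", root_path_in_servers=False))
```

With the default setting the list contains both "/public" and "/api"; with `root_path_in_servers=False` only "/api" remains.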

Since I only need this configuration when the API is running in production behind the API Management service, I set up my FastAPI app conditionally based on the current environment:

    import os

    import fastapi

    if os.getenv("FUNCTIONS_WORKER_RUNTIME"):
        # Running inside Azure Functions, behind API Management
        app = fastapi.FastAPI(
            servers=[{"url": "/api", "description": "API"}],
            root_path="/public",
            root_path_in_servers=False,
        )
    else:
        app = fastapi.FastAPI()

It would probably also be possible to run some sort of local proxy that would mimic the API Management service, though I don't believe Azure offers an official local APIM emulator.
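One way to make that branching easy to test is to compute the constructor arguments in a small function that takes the environment as a parameter. This is a sketch under my own naming (`fastapi_kwargs` is hypothetical); it only builds a dictionary, so it runs without FastAPI installed.

```python
import os


def fastapi_kwargs(environ=None):
    """Return keyword arguments for fastapi.FastAPI() for this environment."""
    environ = os.environ if environ is None else environ
    if environ.get("FUNCTIONS_WORKER_RUNTIME"):
        # Same settings as the production branch above
        return {
            "servers": [{"url": "/api", "description": "API"}],
            "root_path": "/public",
            "root_path_in_servers": False,
        }
    return {}  # plain FastAPI() when the variable is unset


print(fastapi_kwargs({"FUNCTIONS_WORKER_RUNTIME": "python"}))
print(fastapi_kwargs({}))
```

The app would then be created with `fastapi.FastAPI(**fastapi_kwargs())`, and a test can pass in a plain dictionary instead of patching `os.environ`.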

Securing the function

My next goal was to be able to do all of this with a secured Azure function, i.e. a function with `authLevel: 'function'`. A secured function requires an "x-functions-key" header to be sent on every request, and its value must be one of the keys in the function configuration.
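For illustration, this is roughly what a direct call to a secured function has to look like. The sketch uses only the standard library and builds the request without sending it; the host name, the key, and the `build_function_request` helper are all placeholders I made up.

```python
import urllib.request


def build_function_request(
    base_url: str, path: str, function_key: str
) -> urllib.request.Request:
    """Build (but do not send) a GET request carrying the function key."""
    url = base_url.rstrip("/") + "/" + path.lstrip("/")
    return urllib.request.Request(url, headers={"x-functions-key": function_key})


request = build_function_request(
    "https://example-function-app.azurewebsites.net",  # placeholder host
    "/generate_name",
    "not-a-real-key",  # placeholder key
)
# urllib stores the header name as "X-functions-key"; HTTP header names
# are case-insensitive, so the function still recognizes it.
print(request.full_url, dict(request.header_items()))
```

Callers of the public API should never need this key, so the gateway has to add the header on their behalf.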

Fortunately, API Management makes it easy to always send a particular header along to an API's backend. Both of the APIs share the same function backend, which I configured in Bicep like this:

    resource apimBackend 'Microsoft.ApiManagement/service/backends@2021-12-01-preview' = {
      parent: apimService
      name: functionApp.name
      properties: {
        description: functionApp.name
        url: 'https://${functionApp.properties.hostNames[0]}'
        protocol: 'http'
        resourceId: '${environment().resourceManager}${functionApp.id}'
        credentials: {
          header: {
            'x-functions-key': [
              '{{function-app-key}}'
            ]
          }
        }
      }
    }

But where does `{{function-app-key}}` come from? It refers to a "Named Value", a feature of API Management, which I configured like so:

    resource apimNamedValuesKey 'Microsoft.ApiManagement/service/namedValues@2021-12-01-preview' = {
      parent: apimService
      name: 'function-app-key'
      properties: {
        displayName: 'function-app-key'
        value: listKeys('${functionApp.id}/host/default', '2019-08-01').functionKeys.default
        tags: ['key', 'function', 'auto']
        secret: true
      }
    }

I could have also set it directly in the backend, but it's nice to make it a named value so that we can denote it as a value that should be kept secret.
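Conceptually, the `{{function-app-key}}` placeholder is filled in from the named values when the gateway builds the backend request. Here is a toy Python model of that lookup, assuming a hypothetical `resolve_named_values` helper and a made-up key; it is not how API Management resolves values internally.

```python
import re

# Matches placeholders such as {{function-app-key}}
NAMED_VALUE = re.compile(r"\{\{\s*([A-Za-z0-9._-]+)\s*\}\}")


def resolve_named_values(template: str, named_values: dict[str, str]) -> str:
    """Replace each {{name}} placeholder with its named value."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in named_values:
            raise KeyError(f"no named value called {name!r}")
        return named_values[name]

    return NAMED_VALUE.sub(substitute, template)


named_values = {"function-app-key": "not-a-real-key"}  # made-up secret
headers = {"x-functions-key": resolve_named_values("{{function-app-key}}", named_values)}
print(headers)
```

Keeping the key behind a name means the backend definition never contains the secret itself, only a reference to it.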

The final step is to connect the APIs to the backend. Every API has a policy document written in XML (I know, old school!). One of the possible policies is `set-backend-service`, which can be set to an Azure resource ID. I added the policy XML to both APIs:

    <set-backend-service id="apim-generated-policy" backend-id="${functionApp.name}" />

And that does it! See all the Bicep for the API Management in apimanagement.bicep.

All together now

The two trickiest parts were the API/docs distinction and passing on the function key, but there were a few other interesting aspects as well, like testing all the code (100% coverage!) and setting up the project to work with the Azure Developer CLI.

Here's a breakdown of the API code:

- `__init__.py`: Called by Azure Functions, defines a `main` function that uses `ASGIMiddleware` to call the FastAPI app.
- `fastapi_app.py`: Defines a function that returns a FastAPI app. I used a function for better testability.
- `fastapi_routes.py`: Defines a function to handle an API call and uses `fastapi.APIRouter` to attach it to the "generate_name" route.
- `test_azurefunction.py`: Uses Pytest to mock the Azure Functions context, mock environment variables, and check that all the routes respond as expected.
- `test_fastapi.py`: Uses Pytest to check the FastAPI API calls.
- `function.json`: Configuration for Azure Functions, declares that this function responds to an HTTP trigger and has a wildcard route.
- `local.settings.json`: Used by the local Azure Functions emulator to determine the function runtime.

There are also a lot of interesting components in the Bicep files.

I hope that helps anyone else who's trying to deploy FastAPI to this sort of architecture. Let me know if you come up with a different approach or have any questions!